What is Explainable AI, and Why is it Important to You?

by Lauralee Dhabhar, on Jan 10, 2023 10:53:44 AM

Every part of our daily lives is influenced by machine learning (ML) and artificial intelligence (AI). Algorithms show us what to watch, manage traffic flow in emergency rooms, help detect fraudulent bank transactions, and so much more. This technology has endless applications for everything from helping us make informed decisions to catching early warning signs of a health risk. But can it be trusted to show us the best, unbiased, and most accurate information possible?

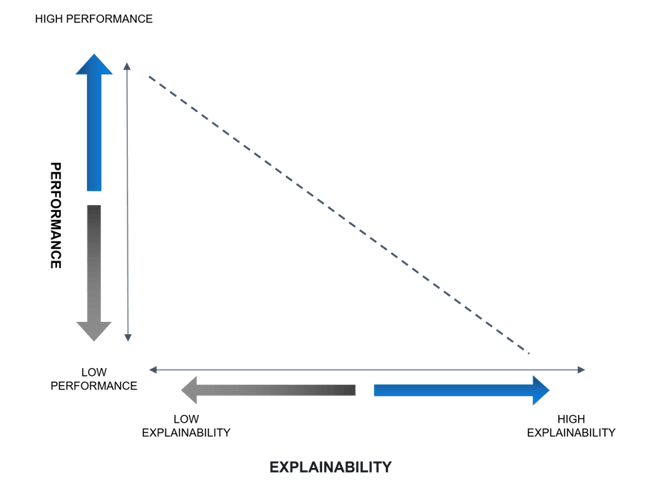

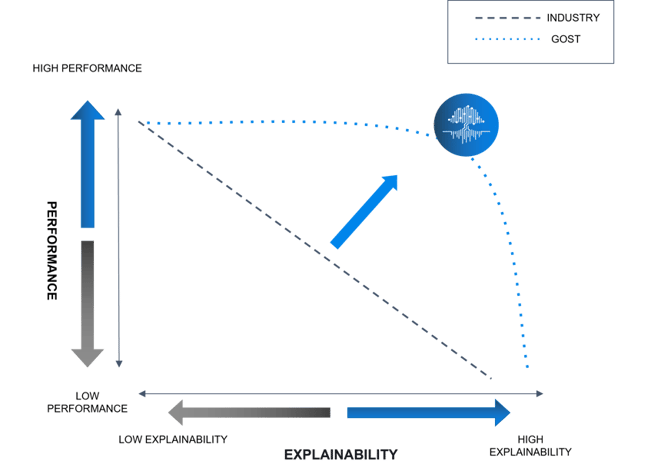

Much of the uncertainty about employing machine learning tools in high-stakes environments comes down to one question: how do we know the results can be trusted? It’s a fair question. These technologies are extremely complex, so much so that the processes occurring between data input and result are often described as hidden inside a “black box.” Historically, the common belief was that we must accept a trade-off between understandability and performance.

However, as this technology becomes more ubiquitous, tools and frameworks are emerging to answer the following questions:

- Why did the model make a certain decision?

- What features affected a prediction?

- How confident is the model prediction?

- Why should I trust the model decision?

We call this explainability, and our scientists at Giant Oak work on it every day. Our science team’s mandate is to ensure GOST delivers efficient, accurate results without compromising explainability. They confront explainability on two levels, global and local.

Global: These are methods used to determine a general set of rules true for all predictions. This is the level of explainability most important to users and purchasing decision-makers.

Local: Local explainability methods are used by data scientists to determine why a model made a particular decision for a single input. This level of explainability is particularly important for machine-learning tools implemented in highly regulated industries, because it allows companies to justify why a certain decision was made.
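To make the two levels concrete, here is a minimal Python sketch. It assumes a tiny linear scoring model whose feature names, weights, and records are all invented for illustration; it is not how GOST or any particular tool works. The local view shows how much each feature moved one prediction away from the average prediction, and the global view averages the size of those contributions across every record.

```python
# Hypothetical linear scoring model. Feature names, weights, and records are
# invented for illustration and do not describe any real system.
WEIGHTS = {"amount": 0.8, "account_age": -0.5, "prior_alerts": 1.2}
BIAS = 0.1

ROWS = [
    {"amount": 1.0, "account_age": 4.0, "prior_alerts": 0.0},
    {"amount": 3.0, "account_age": 1.0, "prior_alerts": 2.0},
    {"amount": 2.0, "account_age": 2.0, "prior_alerts": 1.0},
    {"amount": 0.5, "account_age": 5.0, "prior_alerts": 0.0},
]


def predict(row):
    """Score one record with the linear model."""
    return BIAS + sum(WEIGHTS[name] * row[name] for name in WEIGHTS)


def feature_means(rows):
    """Average value of each feature across the dataset."""
    return {name: sum(r[name] for r in rows) / len(rows) for name in WEIGHTS}


def local_explanation(row, rows):
    """Local view: each feature's contribution to this one prediction,
    measured relative to the average record."""
    means = feature_means(rows)
    return {name: WEIGHTS[name] * (row[name] - means[name]) for name in WEIGHTS}


def global_importance(rows):
    """Global view: mean absolute local contribution of each feature."""
    totals = {name: 0.0 for name in WEIGHTS}
    for row in rows:
        for name, contribution in local_explanation(row, rows).items():
            totals[name] += abs(contribution)
    return {name: total / len(rows) for name, total in totals.items()}


if __name__ == "__main__":
    print("Global importance:", global_importance(ROWS))
    print("Why record 1 scored as it did:", local_explanation(ROWS[1], ROWS))
```

For a linear model like this one, the local contributions add up exactly to the gap between the record's score and the average score, which is what makes them usable as a justification for a single decision. More complex models need the dedicated tools discussed later in this post.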

As a decision maker for an organization, you want to know that the scientists and engineers behind your machine learning tool are applying frameworks to open the black box. These frameworks allow you and your vendor to address:

- Accountability – When something goes wrong, we know how to fix it and ensure the error does not recur.

- Trust – Users can trust the results provided.

- Compliance – Explainability is a must to ensure a machine-learning tool is in line with business standards and government regulations.

- Future performance – Understanding how each element of the model works makes it easier to improve performance.

- Improved control over model outputs – Knowing the decision-making processes allows for agility in fine-tuning.

While you do not need to understand the complexities of every explainability tool, it is a good idea to be familiar with the names and general applications of some of the most commonly used:

- SHAP (SHapley Additive exPlanations) uses a game-theoretic approach to attribute a prediction to its input features, and is used both globally and locally.

- LIME (Local Interpretable Model-Agnostic Explanations) approximates a model's behavior around a single prediction by perturbing the input and fitting a simple, interpretable model to the results.

- ELI5 (Explain Like I’m Five) is a Python package used to help debug machine learning models and explain their predictions.

- WIT (What-If Tool) was developed by Google to test model performance in hypothetical situations.
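For readers curious about what a "game-theoretic approach" looks like in practice, the sketch below computes exact Shapley values for one prediction in plain Python. It does not use the SHAP library. The toy model, the feature names, and the choice to replace "absent" features with a fixed baseline value are all assumptions made for illustration. Each feature is treated as a player, and its attribution is its weighted average marginal contribution across every possible coalition of the other features.

```python
from itertools import combinations
from math import factorial


def toy_model(row):
    """Hypothetical model with an interaction between amount and alerts."""
    return 2 * row["amount"] + row["age"] + row["amount"] * row["alerts"]


def shapley_values(model, instance, baseline):
    """Exact Shapley values for one prediction, by enumerating coalitions.

    Features outside a coalition take their baseline value. That is a
    simplifying assumption made for this sketch.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {
            name: instance[name] if name in coalition else baseline[name]
            for name in names
        }
        return model(mixed)

    attributions = {}
    for name in names:
        others = [other for other in names if other != name]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                without = set(subset)
                total += weight * (value(without | {name}) - value(without))
        attributions[name] = total
    return attributions


# Invented example record and baseline.
INSTANCE = {"amount": 1.0, "age": 1.0, "alerts": 1.0}
BASELINE = {"amount": 0.0, "age": 0.0, "alerts": 0.0}

if __name__ == "__main__":
    print(shapley_values(toy_model, INSTANCE, BASELINE))
```

With these invented inputs the attributions come out at about 2.5 for `amount`, 1.0 for `age`, and 0.5 for `alerts`, and they sum to the difference between the model's output for the record and for the baseline. Note how the interaction term is split evenly between the two features involved. Enumerating every coalition is only feasible for a handful of features, which is why practical tools rely on approximations.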

These are only a handful of the dozens of tools available to data scientists, and no single tool provides a science team with all of the information they need to achieve explainability. Our scientists use all of the above, and many other methodologies, to ensure GOST is explainable AI.

To learn more about our current work on explainability, join us for our four-part webinar series. The first is available to view on demand now.