by Lauralee Dhabhar, on Dec 16, 2022 9:51:54 AM

What is the Black Box?

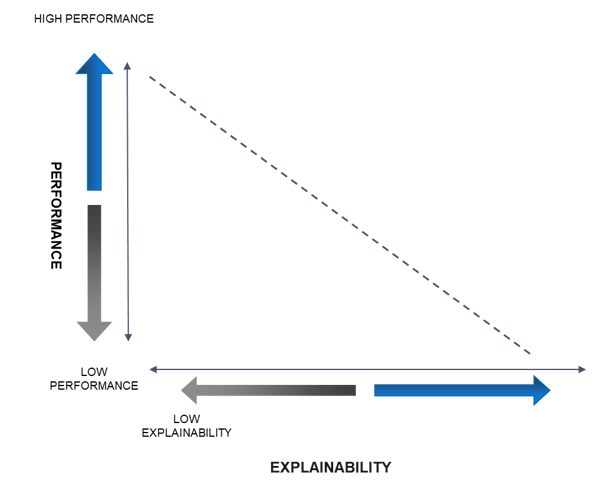

In the realm of artificial intelligence (AI) and machine learning (ML), the term "black box" refers to models that present results without a clear explanation of how a decision was reached. In other words, a user inputs data, something mysterious happens, and a result is delivered. Historically, the more advanced and complex the model, the less explainable its results.

However, our science team at Giant Oak is dedicated to bringing explainability to GOST without sacrificing performance. Their work allows our users to fully understand and trust the results GOST delivers. Although these models are exceptionally complex, we know that understanding how they work is possible, even if you don't have a PhD in data science. This week we launched the first episode of a four-part series in which we open the black box and invite you to look inside.

Giant Oak, Inc.

2300 Wilson Boulevard

Suite 700 PMB 142

Arlington, VA 22201

Email: info@giantoak.com

Phone: 703.842.0661